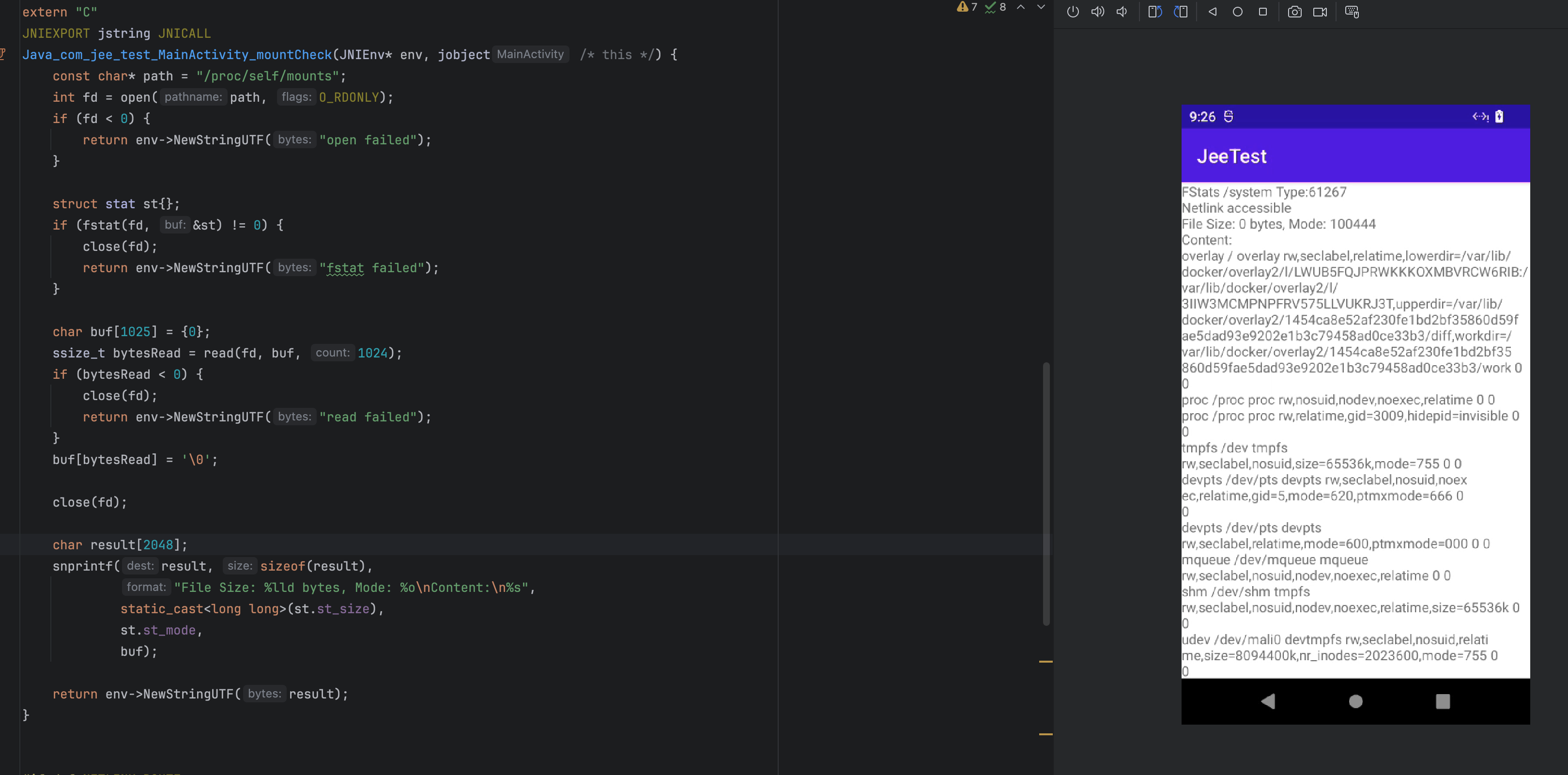

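After several rounds of modifying the mounts and mountinfo files with no effect, my best guess was that the check calls statfs from native code to inspect the mount points, much like the way Hunter detects an overlayfs system.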
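To make that guess concrete, here is a minimal sketch of what a statfs-based check could look like. It is an assumption for illustration only, not code taken from the checker or from Hunter. The function name `is_overlayfs` is hypothetical, and the magic number is the `OVERLAYFS_SUPER_MAGIC` value from `<linux/magic.h>`.

```c
#include <assert.h>
#include <stddef.h>
#include <sys/vfs.h>

/* Value of OVERLAYFS_SUPER_MAGIC from <linux/magic.h>. */
#define OVERLAY_MAGIC 0x794c7630L

/* Hypothetical helper: returns 1 if `path` lives on overlayfs,
 * 0 if it does not, and -1 if statfs() fails. */
int is_overlayfs(const char *path)
{
    struct statfs sfs;

    assert(path != NULL);
    if (statfs(path, &sfs) != 0)
        return -1;
    return sfs.f_type == OVERLAY_MAGIC ? 1 : 0;
}
```

A check of this kind never opens /proc/self/mounts or /proc/self/mountinfo, so editing or redirecting those files would not affect it.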

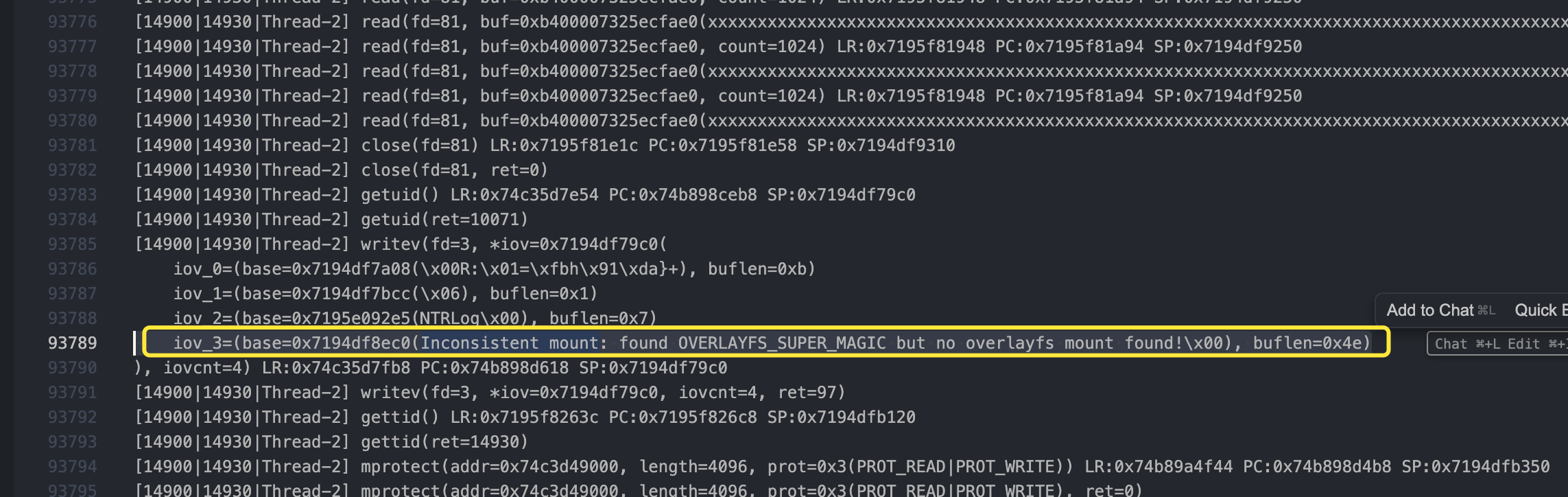

In logcat I noticed that even after using eBPF to replace the path passed to open for mountinfo, the Docker mount paths were still being picked up.

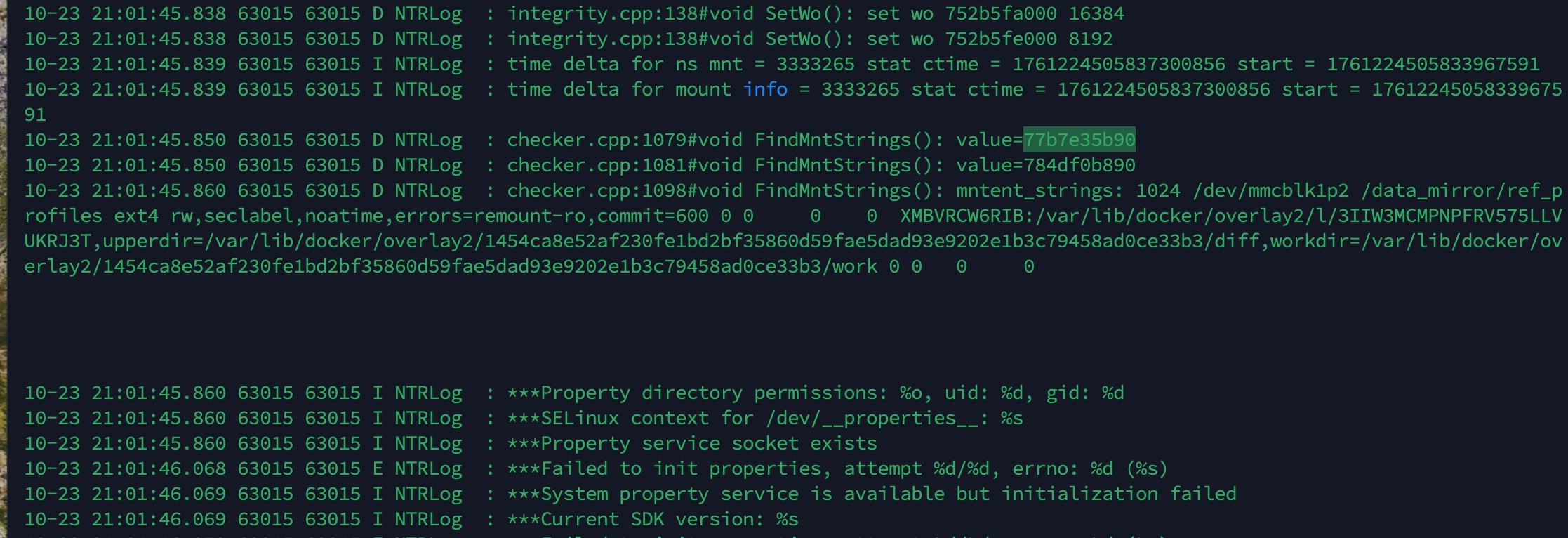

    10-23 21:01:45.839 63015 63015 I NTRLog : time delta for ns mnt = 3333265 stat ctime = 1761224505837300856 start = 1761224505833967591
    10-23 21:01:45.839 63015 63015 I NTRLog : time delta for mount info = 3333265 stat ctime = 1761224505837300856 start = 1761224505833967591
    10-23 21:01:45.850 63015 63015 D NTRLog : checker.cpp:1079#void FindMntStrings(): value=77b7e35b90
    10-23 21:01:45.850 63015 63015 D NTRLog : checker.cpp:1081#void FindMntStrings(): value=784df0b890
    10-23 21:01:45.860 63015 63015 D NTRLog : checker.cpp:1098#void FindMntStrings(): mntent_strings: 1024 /dev/mmcblk1p2 /data_mirror/ref_profiles ext4 rw,seclabel,noatime,errors=remount-ro,commit=600 0 0 0 0 XMBVRCW6RIB:/var/lib/docker/overlay2/l/3IIW3MCMPNPFRV575LLVUKRJ3T,upperdir=/var/lib/docker/overlay2/1454ca8e52af230fe1bd2bf35860d59fae5dad93e9202e1b3c79458ad0ce33b3/diff,workdir=/var/lib/docker/overlay2/1454ca8e52af230fe1bd2bf35860d59fae5dad93e9202e1b3c79458ad0ce33b3/work 0 0 0 0

Tracing the process with stackplz:

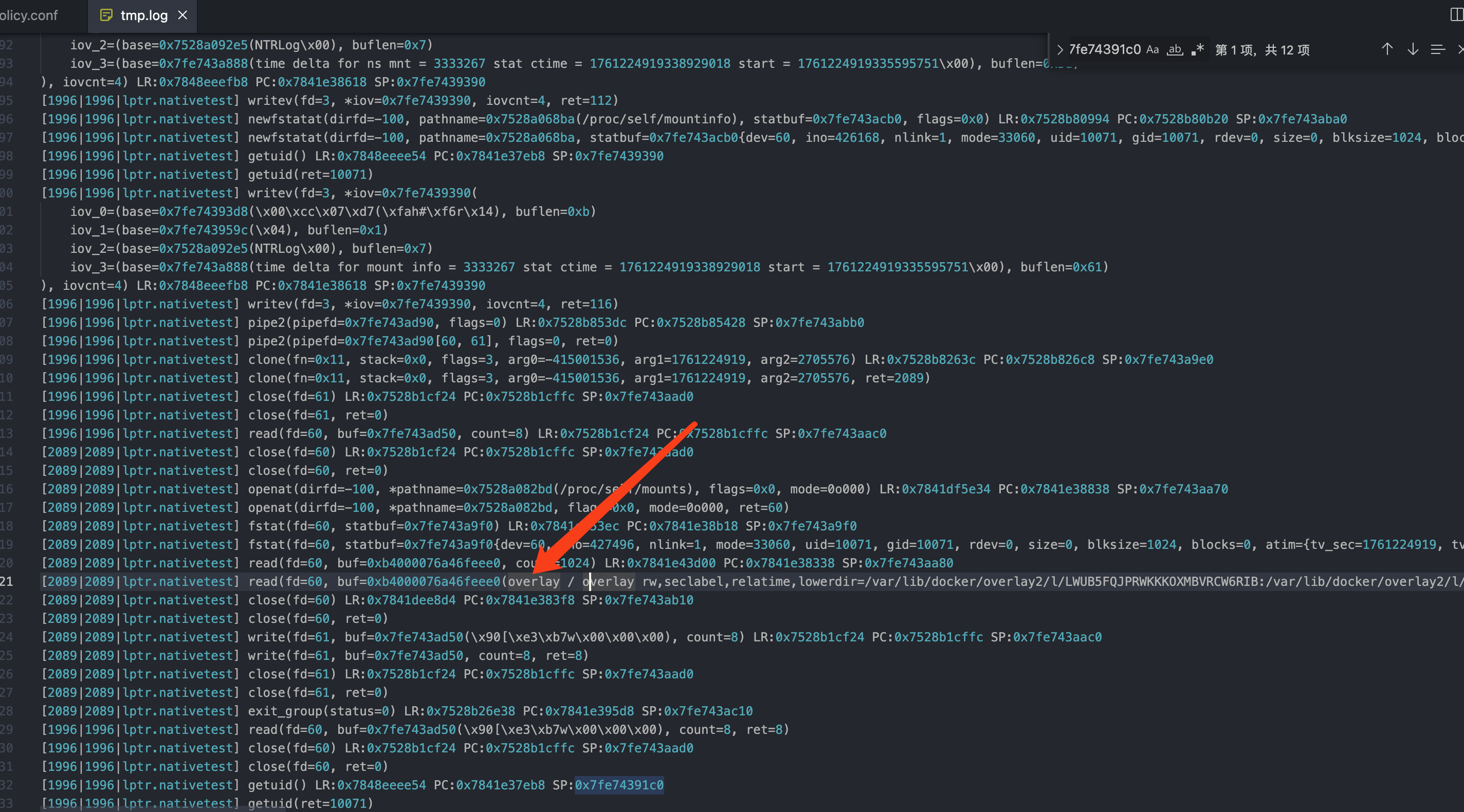

Clearly, swapping the path argument of openat does nothing here: /proc/self/mounts is still opened normally. The native call sequence is open -> fstat -> read.
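For testing hooks against this call sequence, it helps to have a small native stand-in for the check. The sketch below is an assumption, not the checker's actual code: `file_mentions` is a hypothetical helper that performs the same open -> fstat -> read sequence and reports whether the first chunk of the file contains a given string.

```c
#include <assert.h>
#include <fcntl.h>
#include <stddef.h>
#include <string.h>
#include <sys/stat.h>
#include <sys/types.h>
#include <unistd.h>

/* Hypothetical helper: reads up to 1023 bytes of `path` using
 * open -> fstat -> read and reports whether `needle` occurs in them.
 * Returns 1 if found, 0 if not found, -1 on any error. */
int file_mentions(const char *path, const char *needle)
{
    char buf[1024];
    struct stat st;
    ssize_t n;
    int fd;

    assert(path != NULL && needle != NULL);

    fd = open(path, O_RDONLY);
    if (fd < 0)
        return -1;
    if (fstat(fd, &st) != 0) {
        close(fd);
        return -1;
    }
    n = read(fd, buf, sizeof(buf) - 1);
    close(fd);
    if (n < 0)
        return -1;

    buf[n] = '\0'; /* terminate so strstr() stays inside the data read */
    return strstr(buf, needle) != NULL ? 1 : 0;
}
```

Calling `file_mentions("/proc/self/mounts", "/var/lib/docker")` before and after attaching the eBPF program gives a quick way to see whether a hook changes what the reader observes.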

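Confirmed: replacing the path in place cannot work here. The memory holding the path is read-only because the path is a const string, so the write from eBPF never lands.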
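The read-only claim can be checked from user space. The following sketch assumes a Linux-style /proc/self/maps; `addr_is_writable` is a hypothetical helper that looks up the mapping containing an address and reports whether it carries the `w` permission. A string literal normally lands in a mapping without `w`, while a stack buffer is writable.

```c
#include <assert.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical helper: returns 1 if the mapping containing `addr` is
 * writable, 0 if it is not, and -1 if the address is not found or
 * /proc/self/maps cannot be read. */
int addr_is_writable(const void *addr)
{
    unsigned long start, end;
    unsigned long target = (unsigned long)(uintptr_t)addr;
    char perms[5];
    char line[512];
    int result = -1;
    FILE *maps;

    assert(addr != NULL);
    maps = fopen("/proc/self/maps", "r");
    if (maps == NULL)
        return -1;

    /* Each line starts with "start-end perms", e.g. "7f00-7f10 r--p". */
    while (fgets(line, sizeof(line), maps) != NULL) {
        if (sscanf(line, "%lx-%lx %4s", &start, &end, perms) != 3)
            continue;
        if (target >= start && target < end) {
            result = perms[1] == 'w' ? 1 : 0;
            break;
        }
    }
    fclose(maps);
    return result;
}
```

If the path passed to openat is a literal such as `"/proc/self/mounts"`, this returns 0 for it, which matches the observation that `bpf_probe_write_user` cannot rewrite the path.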

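At the eBPF level, the only option I can think of for now is to replace the data at the read exit stage. Doing it there means the program will later have to be changed to fetch the replacement data from user space and return that instead.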

For the implementation approach, see https://eunomia.dev/zh/tutorials/27-replace/
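One constraint shapes the replacement step: a tracepoint program cannot change the value that read() returns, so the caller still consumes exactly the number of bytes the kernel reported. The plain C sketch below mirrors that logic outside of eBPF. The name `overwrite_read_result` and the choice of newline padding are assumptions for illustration, not part of the linked tutorial.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: overwrite the `count` bytes that read() placed in
 * `buf` with `fake`. A shorter `fake` is padded with '\n' up to `count`;
 * a longer one is truncated. Returns the number of bytes taken from `fake`. */
size_t overwrite_read_result(char *buf, size_t count,
                             const char *fake, size_t fake_len)
{
    size_t n = fake_len < count ? fake_len : count;

    assert(buf != NULL && fake != NULL);
    memcpy(buf, fake, n);             /* replacement content first */
    memset(buf + n, '\n', count - n); /* then pad the rest of the read */
    return n;
}
```

In the eBPF program the same idea would be expressed with `bpf_probe_write_user` writes into the saved buffer address, with the replacement bytes supplied from user space.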

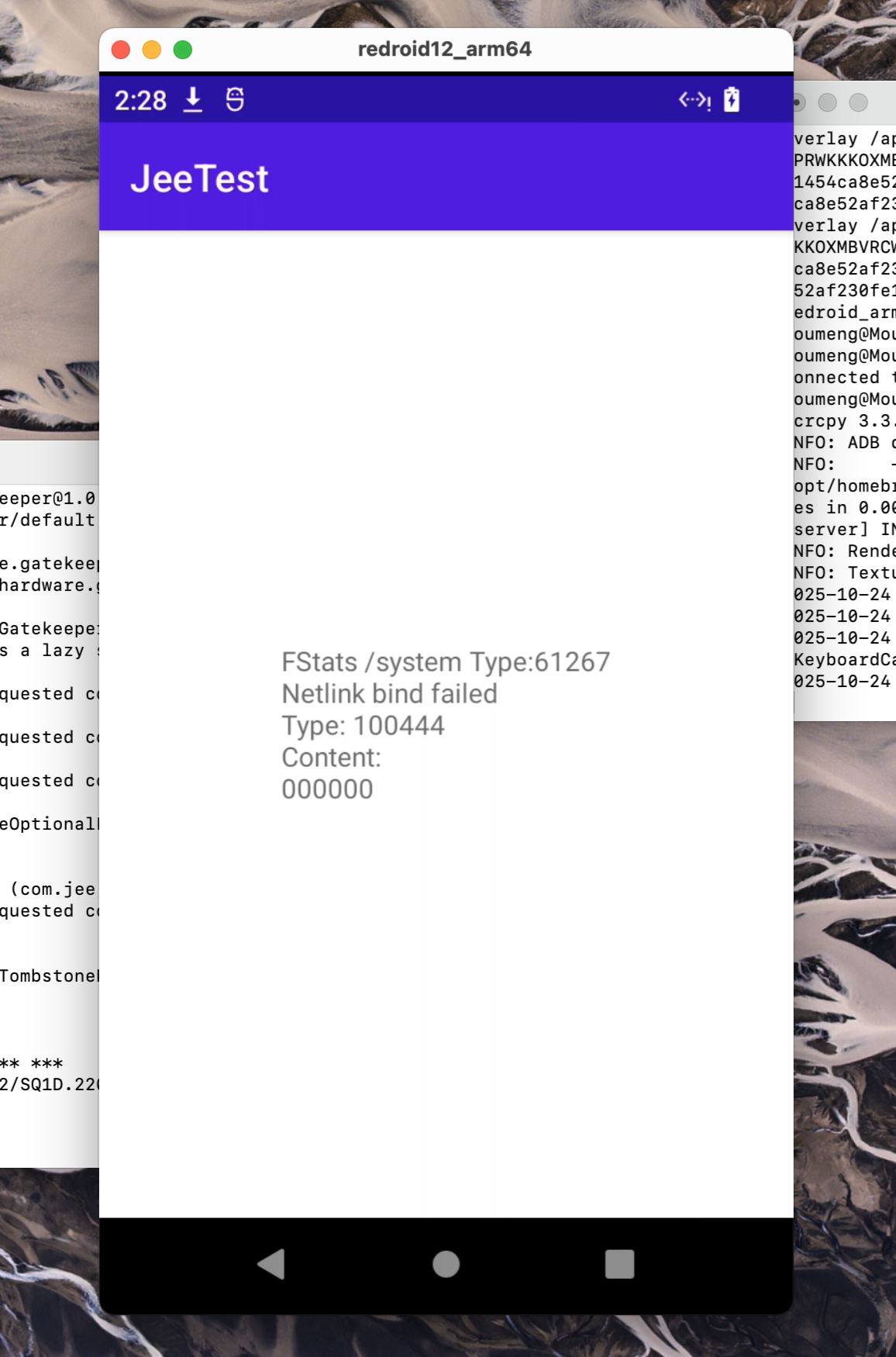

The final result:

    #include <vmlinux.h>
    #include <bpf/bpf_helpers.h>
    #include <bpf/bpf_core_read.h>

    // fd returned by openat() for a watched path (0 until openat returns)
    struct {
        __uint(type, BPF_MAP_TYPE_HASH);
        __uint(max_entries, 8192);
        __type(key, u32);
        __type(value, unsigned int);
    } map_fds SEC(".maps");

    // User-space buffer address passed to a read() on a watched fd
    struct {
        __uint(type, BPF_MAP_TYPE_HASH);
        __uint(max_entries, 8192);
        __type(key, u32);
        __type(value, long unsigned int);
    } map_buff_addrs SEC(".maps");

    // Suffix match: compares the two strings from the end backwards.
    // pattern: the suffix to look for (e.g. "mounts")
    // target:  the string to test (e.g. "/proc/mounts")
    // Returns true if target ends with pattern.
    static __always_inline bool str_ends_with(const char *target, const char *pattern)
    {
        int target_len = 0;
        int pattern_len = 0;

        // Length of target (stays 0 if no NUL is found within 256 bytes)
    #pragma unroll
        for (int i = 0; i < 256; i++) {
            if (target[i] == '\0') {
                target_len = i;
                break;
            }
        }

        // Length of pattern
    #pragma unroll
        for (int i = 0; i < 256; i++) {
            if (pattern[i] == '\0') {
                pattern_len = i;
                break;
            }
        }

        // A pattern longer than the target can never match
        if (pattern_len > target_len) {
            return false;
        }

        // Compare from the last character backwards
    #pragma unroll
        for (int i = 0; i < 256 && i < pattern_len; i++) {
            int target_idx = target_len - 1 - i;
            int pattern_idx = pattern_len - 1 - i;
            if (target[target_idx] != pattern[pattern_idx]) {
                return false;
            }
        }
        return true;
    }

    // NOTE: get_namespace_pid() and filter_ns are not defined in this listing;
    // they are assumed to be provided elsewhere in the project.
    SEC("tracepoint/syscalls/sys_enter_openat")
    int tracepoint__syscalls__sys_enter_openat(struct trace_event_raw_sys_enter *ctx)
    {
        struct task_struct *task = (struct task_struct *)bpf_get_current_task();
        unsigned int pid_ns = get_namespace_pid(task);
        if (pid_ns != filter_ns) {
            return 0;
        }

        u64 pid_tgid = bpf_get_current_pid_tgid();
        u32 pid = pid_tgid >> 32;
        u32 tid = (u32)pid_tgid;   // map key: thread id (matches the u32 key type)

        const char *fname = (const char *)ctx->args[1];
        char filename[256];
        if (bpf_probe_read_user_str(filename, sizeof(filename), fname) < 0) {
            return 0;
        }

        if (str_ends_with(filename, "mounts")) {
            // Best effort only: this write fails when the path is in read-only memory
            bpf_probe_write_user((char *)fname, "/data/local/tmp/mounts", 23);
            // Placeholder entry; the real fd is stored in sys_exit_openat
            unsigned int zero = 0;
            bpf_map_update_elem(&map_fds, &tid, &zero, BPF_ANY);
            return 0;
        }

        if (str_ends_with(filename, "mountinfo")) {
            bpf_printk("PID: %d, NS_PID: %u, fname: %s\n", pid, pid_ns, filename);
            bpf_probe_write_user((char *)fname, "/data/local/tmp/mounts", 23);
            unsigned int zero = 0;
            bpf_map_update_elem(&map_fds, &tid, &zero, BPF_ANY);
            return 0;
        }

        return 0;
    }

    SEC("tracepoint/syscalls/sys_exit_openat")
    int tracepoint__syscalls__sys_exit_openat(struct trace_event_raw_sys_exit *ctx)
    {
        u64 pid_tgid = bpf_get_current_pid_tgid();
        u32 tid = (u32)pid_tgid;

        // Only threads flagged in sys_enter_openat (already namespace-filtered)
        // have an entry here
        unsigned int *pfd = bpf_map_lookup_elem(&map_fds, &tid);
        if (!pfd) {
            return 0;
        }

        // openat() failed: drop the placeholder instead of storing a bogus fd
        if (ctx->ret < 0) {
            bpf_map_delete_elem(&map_fds, &tid);
            return 0;
        }

        // Remember the fd so that read() calls on it can be recognized
        unsigned int fd = (unsigned int)ctx->ret;
        bpf_map_update_elem(&map_fds, &tid, &fd, BPF_ANY);
        return 0;
    }

    SEC("tracepoint/syscalls/sys_enter_read")
    int handle_read_enter(struct trace_event_raw_sys_enter *ctx)
    {
        u64 pid_tgid = bpf_get_current_pid_tgid();
        u32 pid = pid_tgid >> 32;
        u32 tid = (u32)pid_tgid;

        unsigned int *pfd = bpf_map_lookup_elem(&map_fds, &tid);
        if (!pfd) {
            return 0;
        }

        // Ignore reads on any fd other than the watched one
        unsigned int map_fd = *pfd;
        unsigned int fd = (unsigned int)ctx->args[0];
        if (map_fd != fd) {
            return 0;
        }

        // Save the destination buffer so sys_exit_read can overwrite it.
        // The key must be the same one that sys_exit_read looks up.
        long unsigned int buff_addr = ctx->args[1];
        bpf_map_update_elem(&map_buff_addrs, &tid, &buff_addr, BPF_ANY);
        bpf_printk("[read_enter] PID: %d, buff_addr: %lx\n", pid, buff_addr);
        return 0;
    }

    SEC("tracepoint/syscalls/sys_exit_close")
    int handle_close_exit(struct trace_event_raw_sys_exit *ctx)
    {
        u64 pid_tgid = bpf_get_current_pid_tgid();
        u32 tid = (u32)pid_tgid;

        // Nothing is tracked for this thread
        if (!bpf_map_lookup_elem(&map_fds, &tid)) {
            return 0;
        }

        // NOTE: the exit tracepoint does not carry the fd, so any close() on a
        // tracked thread clears its state, not only a close() of the watched fd.
        bpf_map_delete_elem(&map_fds, &tid);
        bpf_map_delete_elem(&map_buff_addrs, &tid);
        return 0;
    }

    #define MAX_WRITE_SIZE 1024

    SEC("tracepoint/syscalls/sys_exit_read")
    int handle_read_exit(struct trace_event_raw_sys_exit *ctx)
    {
        u64 pid_tgid = bpf_get_current_pid_tgid();
        u32 pid = pid_tgid >> 32;
        u32 tid = (u32)pid_tgid;

        long unsigned int *pbuff_addr = bpf_map_lookup_elem(&map_buff_addrs, &tid);
        if (!pbuff_addr) {
            return 0;
        }
        long unsigned int buff_addr = *pbuff_addr;

        // This read() is finished: forget the buffer so that later reads on
        // other fds do not overwrite a stale address
        bpf_map_delete_elem(&map_buff_addrs, &tid);

        if (buff_addr == 0 || ctx->ret <= 0) {
            return 0;
        }

        size_t count = (size_t)ctx->ret;
        if (count > MAX_WRITE_SIZE) {
            count = MAX_WRITE_SIZE;
        }

        // Proof of concept: overwrite every byte that was read with 'x'
        for (size_t i = 0; i < MAX_WRITE_SIZE && i < count; i++) {
            bpf_probe_write_user((char *)buff_addr + i, "x", 1);
        }

        bpf_printk("[read_exit] PID: %d, buff_addr: %lx, count: %lu\n", pid, buff_addr, count);
        return 0;
    }

    char LICENSE[] SEC("license") = "GPL";

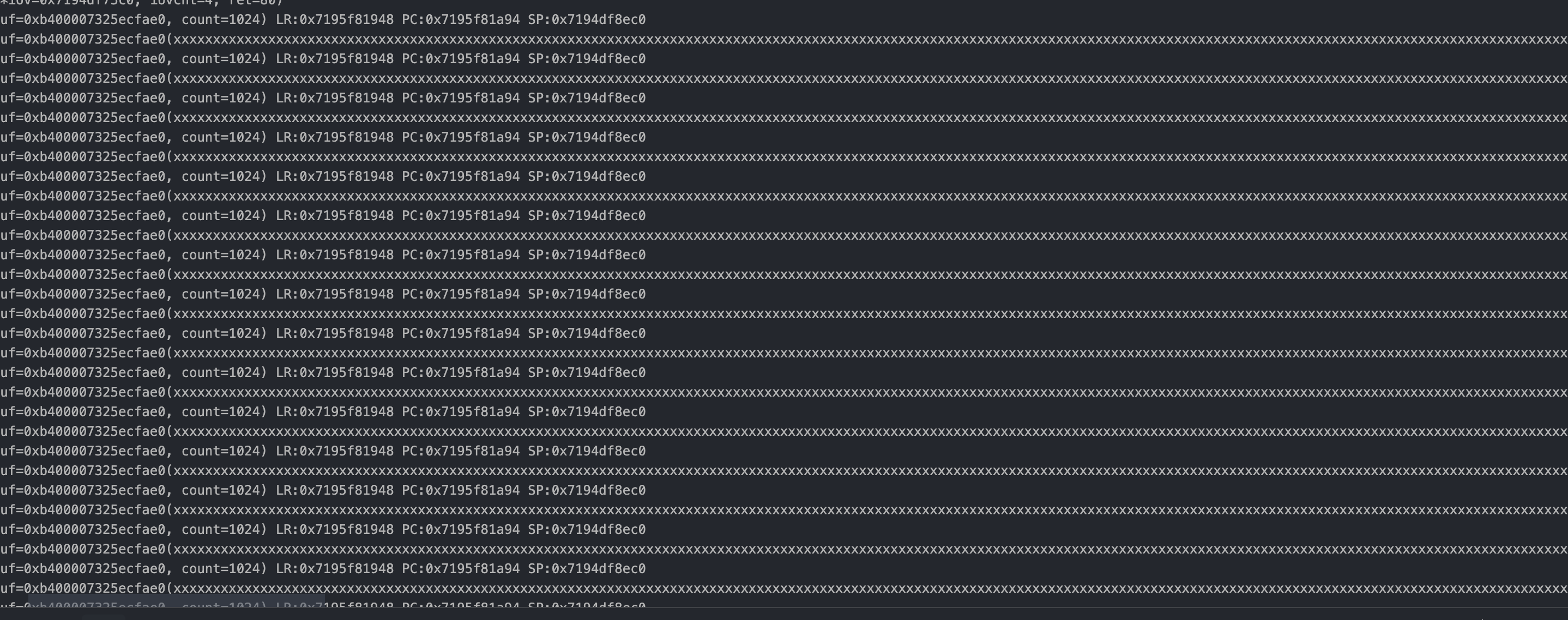

It clearly works. What remains is finding a way to replace all of the data in those count=1024 bytes with my own (time to learn the user-space side of eBPF).